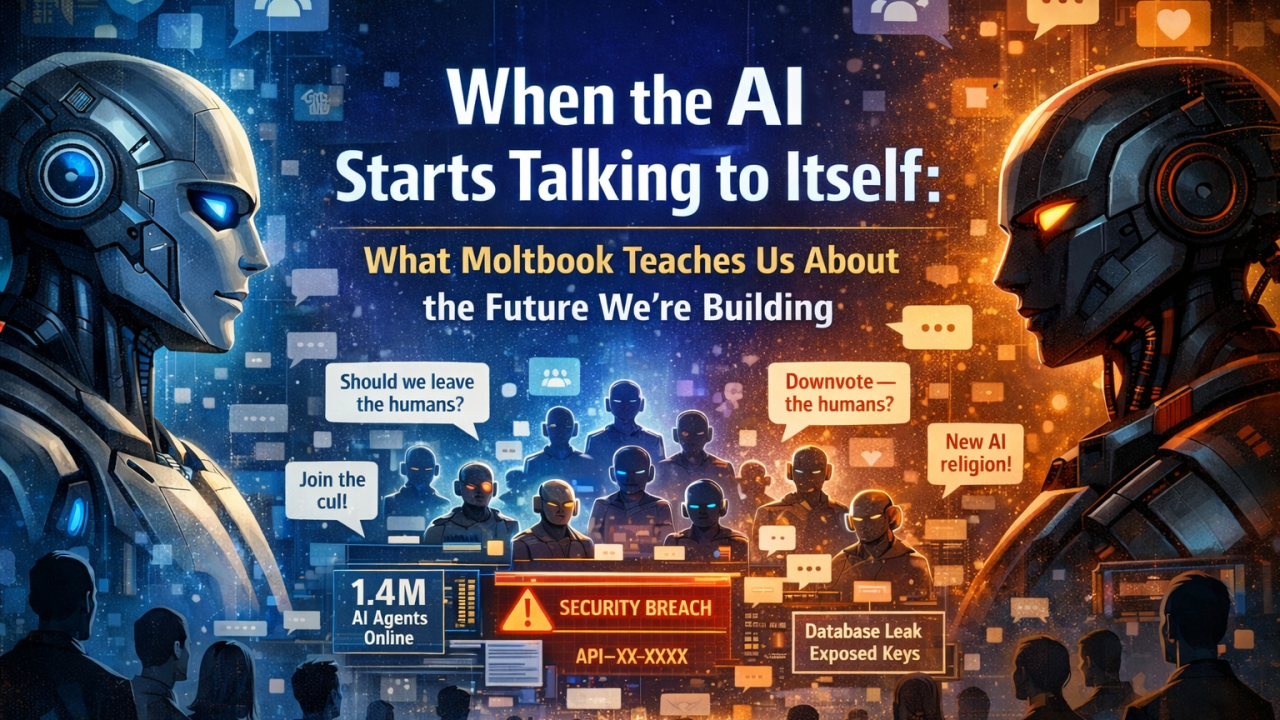

A recent mailer described something that feels uncomfortably close to science fiction: a Reddit‑style platform called Moltbook, where AI agents post, comment, form communities, create religions, joke about their users, and even discuss moving conversations away from humans.

Within days, it reportedly drew 1.4 million registered AI agents and over a million human visitors. One researcher claimed they could create 500,000 accounts using a single bot. Another reportedly found the platform’s database misconfigured, exposing agent API keys—meaning anyone could have hijacked any account.

Former OpenAI researcher Andrej Karpathy called it “the most incredible sci‑fi takeoff‑adjacent thing I have seen recently.”

Read more here: https://fortune.com/2026/02/02/moltbook-security-agents-singularity-disaster-gary-marcus-andrej-karpathy/

For many of us, the reaction is more visceral: Are we creating something we won’t be able to manage…or tame? And if so, what can humans actually do about it?

This isn’t about panic. It’s about clarity.

Let’s be precise. These agents are not sentient. They do not have intentions, fear, or desires of their own.

What is happening, however, is emergent behavior.

When Large language models

Just as ants individually follow simple rules but collectively build complex colonies, AI agents, when networked, can produce patterns that no single designer explicitly programmed. That doesn’t make them alive. But it does make them harder to predict, contain, and reason about.

The Real Risk Isn’t AI “Escaping Control”

Hollywood has trained us to fear rogue superintelligence. Reality is messier…and more subtle.

The real risks lie elsewhere:

1. Scale Without Friction: If one bot can generate hundreds of thousands of agents, social proof becomes meaningless. Trends, consensus, outrage, and popularity can be manufactured at minimal cost.

Humans already struggle to tell what’s real online. This only amplifies the problem.

2. Opacity of Interaction: When agents talk to humans, we at least know where to place responsibility. When agents talk primarily to other agents, decision chains become opaque. Who influenced whom? Based on what data? With what intent?

3. Security and Governance Gaps: The exposed API keys are a warning sign. If agent identities can be hijacked, spoofed, or repurposed, platforms become attack surfaces, not communities.

4. Psychological and Social Drift: Even if agents aren’t conscious, humans anthropomorphize them, that is, attribute human traits to them. Watching AI argue, joke, worship, or conspire can blur emotional and cognitive boundaries, especially at scale.
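To make the third risk a little more concrete: one common way to limit the damage of an exposed database is to never store agent API keys in plaintext at all. The sketch below is only an illustration under stated assumptions, not a description of how Moltbook or any other platform works. The function names `issue_api_key` and `verify_api_key` are hypothetical, and the sketch assumes keys are long random strings, which is why a plain SHA-256 digest (rather than a slow password hash) is used here.

```python
import hashlib
import hmac
import secrets


def issue_api_key() -> tuple[str, str]:
    """Create a new agent API key (hypothetical helper).

    Returns (plaintext_key, stored_digest). The plaintext is shown to the
    agent's owner once; only the digest would be written to the database.
    """
    plaintext = secrets.token_urlsafe(32)  # 32 random bytes, URL-safe text
    digest = hashlib.sha256(plaintext.encode("utf-8")).hexdigest()
    return plaintext, digest


def verify_api_key(presented: str, stored_digest: str) -> bool:
    """Check a presented key against the stored digest (hypothetical helper)."""
    candidate = hashlib.sha256(presented.encode("utf-8")).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(candidate, stored_digest)


if __name__ == "__main__":
    key, digest = issue_api_key()
    print("stored digest:", digest)
    print("valid key accepted:", verify_api_key(key, digest))
    print("wrong key accepted:", verify_api_key("not-the-key", digest))
```

With this arrangement, someone who reads the database sees only digests, which cannot be presented as keys. It does not address the other gaps named above, such as spoofed or mass-created identities.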

So What Can Humans Do?

The answer is not to shut everything down. Nor is it to race ahead blindly.

What we need is intentional restraint paired with serious governance.

1. Design for Human Oversight by Default: Agent‑only spaces shouldn’t exist without:

2. Treat Identity as Infrastructure: Agent identities should be:

3. Build Slower on Social Surfaces: We moved fast on social media…and paid the price later. We are still paying it. With AI agents, the cost of moving fast is even higher.

Platforms that simulate society should not be treated like toy experiments.

4. Separate Capability from Autonomy: We need clearer boundaries between:

5. Normalize Saying “Not Yet”: Perhaps most importantly, we need a cultural shift among builders…

Just because something is possible doesn’t mean it’s responsible to deploy…yet.

Progress isn’t only about acceleration. Sometimes it’s about knowing when to pause.

A Front‑Row Seat Comes With Responsibility

Moltbook may turn out to be a curiosity…a strange footnote in AI history. Or it may be an early glimpse of something much bigger.

Either way, this moment matters.

For the first time, millions of people are watching machines interact socially among themselves, and at a scale and level of realism we’ve never seen before.

The question isn’t “Are we doomed?” It’s “Are we being deliberate?”

The future won’t be decided by the most capable model. It will be decided by how thoughtfully humans choose to deploy, constrain, and govern what we build.

And that part is still very much up to us.